An open-source, LangGraph-powered advisor for Python, Node.js, and Java projects. Free, local-friendly, and easy to extend for your own workflow.

The Monday Morning Problem

If you maintain a project with even a handful of dependencies, you probably know this pattern. Monday morning, a batch of PRs is waiting for you:

chore(deps): bump flask from 3.0.0 to 3.1.0

chore(deps): bump pydantic from 2.5.0 to 2.9.2

chore(deps-dev): bump pytest from 8.0.0 to 8.3.3

chore(deps): bump express from 4.18.2 to 4.21.0

chore(deps): bump spring-boot-starter from 3.1.0 to 3.3.4

Dependabot is genuinely great at surfacing these. It does the hard work of noticing new versions exist and opening clean PRs for each one. But once the PR is open, the investigation is still on you. Open the changelog, read through the releases between old and new versions, look for breaking changes, check whether there’s a CVE involved, judge whether the new release is stable or whether you should wait a week.

That’s 15-20 minutes per PR, done carefully. Across a normal week it adds up to real hours, and the work is the same every time. Which is a pretty good signal that it’s the kind of work a tool can help with.

So I built one. It’s called DepAdvisor, it’s free and open source, and it uses an LLM to analyze each available update, weigh the risks, and generate a prioritized report you can actually act on. It runs on your machine (or in your CI) in about 90 seconds, and it’s designed to be extended for whatever your workflow needs.

Here’s what it does, what it looks like, and how to make it your own.

What It Actually Does

You point DepAdvisor at a project. It reads your dependency files, checks what updates are available, pulls in vulnerability data and changelogs, and hands each update to an LLM that classifies its risk and writes a short explanation. The output is a prioritized report that tells you exactly where to start.

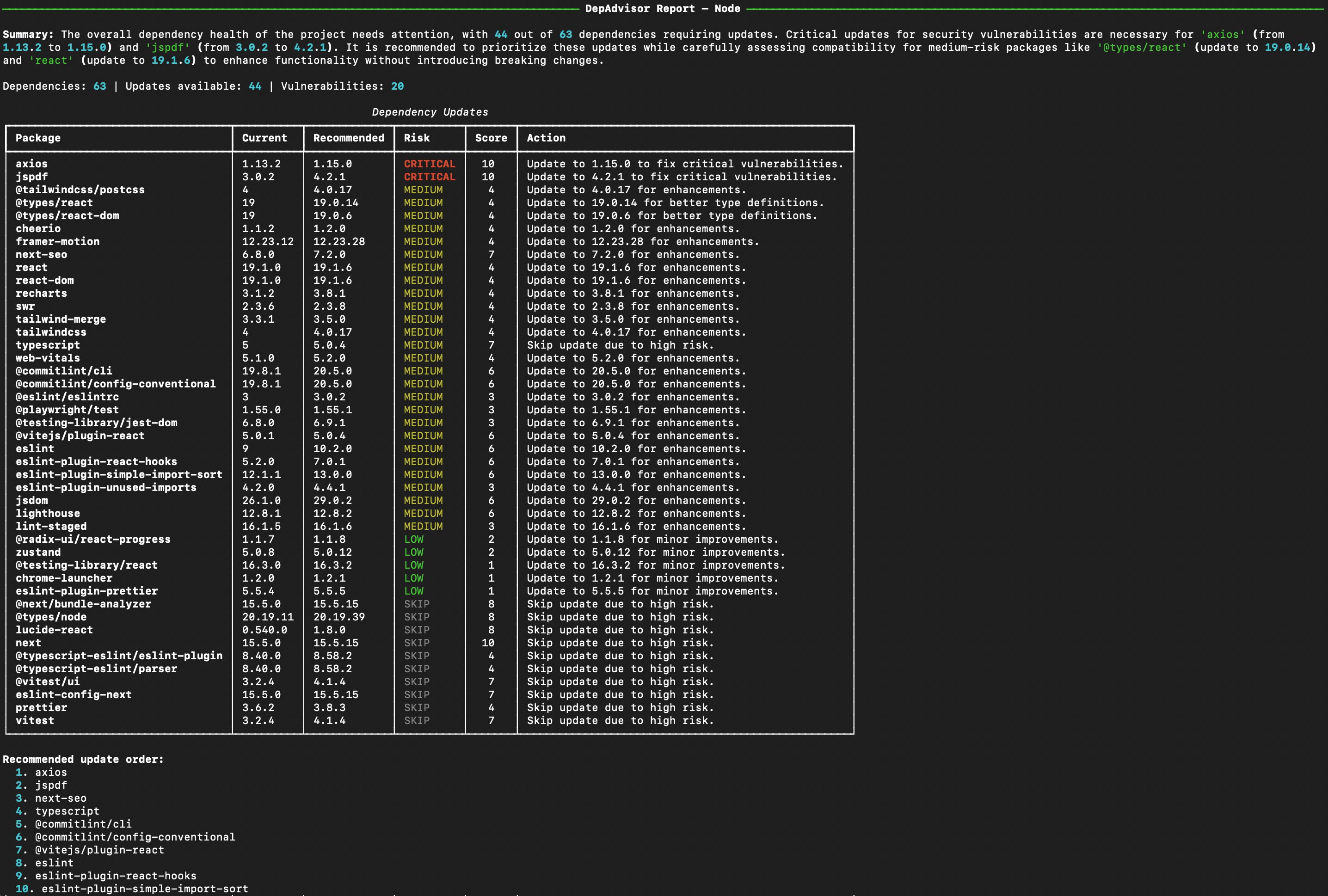

Here’s real output from running DepAdvisor on a Node.js/React project with 63 dependencies:

Every update gets four things: a risk level (CRITICAL, MEDIUM, LOW, or SKIP), a numerical score from 1 to 10, a recommended target version, and a short action statement. At the top you get an executive summary written by the LLM, and at the bottom you get a suggested update order so you know which PRs to merge first.
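To make the shape of a report entry concrete, here is a minimal sketch of how those four fields could be modeled and sorted into an update order. The names `RiskLevel`, `UpdateAdvice`, and `suggested_order` are hypothetical and not taken from DepAdvisor's code, and the ordering rule (critical first, SKIP last, higher score first within a level) is an assumption.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskLevel(IntEnum):
    # Lower value sorts earlier in the suggested update order (assumed ordering).
    CRITICAL = 0
    MEDIUM = 1
    LOW = 2
    SKIP = 3


@dataclass(frozen=True)
class UpdateAdvice:
    """Hypothetical model of one report entry with the four fields described above."""

    package: str
    risk: RiskLevel
    score: int  # 1 to 10
    target_version: str
    action: str

    def __post_init__(self) -> None:
        if not 1 <= self.score <= 10:
            raise ValueError(f"score must be between 1 and 10, got {self.score}")


def suggested_order(entries: list[UpdateAdvice]) -> list[UpdateAdvice]:
    """Sort by risk level first, then by descending score within a level."""
    return sorted(entries, key=lambda e: (e.risk, -e.score))


# Made-up entries for illustration only; this is not real DepAdvisor output.
entries = [
    UpdateAdvice("pkg-a", RiskLevel.LOW, 3, "1.2.4", "Update when convenient"),
    UpdateAdvice("pkg-b", RiskLevel.SKIP, 8, "2.0.0", "Wait for adoption"),
    UpdateAdvice("pkg-c", RiskLevel.CRITICAL, 9, "4.1.1", "Update now"),
]
for entry in suggested_order(entries):
    print(f"{entry.risk.name:<8} {entry.package} -> {entry.target_version}: {entry.action}")
```

Keeping the record immutable and validating the score on construction means a malformed LLM reply fails loudly before it reaches the report.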

Notice what you’re not doing: opening each changelog, searching for breaking changes, cross-referencing CVE databases, or guessing whether a new version is stable. That’s all been done for you.

The “SKIP” Tier Is the Interesting Part

Most dependency tools treat “new version available” as “you should update.” DepAdvisor is more cautious, and that turns out to be useful.

Look at that output again. next has an update available from 15.5.0 to 15.5.15. Most tools would just say “merge the PR.” DepAdvisor scored it 10 and recommended SKIP. Why? Because in this case the recommended version had just been released and hadn’t yet picked up community adoption signals. Same with vitest 4.1.4 (a major version bump) and @vitest/ui 4.1.4.

This is something most automated update tools won’t do by default. They see “new version available” and create a PR. DepAdvisor factors in release age, major-vs-minor-vs-patch differences, changelog content, and vulnerability data before making a recommendation. Sometimes the right answer is “wait two weeks and let someone else find the bugs.”
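Two of those signals, the size of the version bump and the age of the release, are simple enough to compute without an LLM. The sketch below is an illustration under stated assumptions: the function names are hypothetical, the 14-day window is borrowed from the "wait two weeks" remark rather than from DepAdvisor's configuration, and only plain MAJOR.MINOR.PATCH versions are handled.

```python
from datetime import datetime, timedelta, timezone


def parse_version(version: str) -> tuple[int, int, int]:
    """Parse a plain MAJOR.MINOR.PATCH string; pre-release tags are not handled."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def bump_type(current: str, candidate: str) -> str:
    """Return 'major', 'minor', 'patch', or 'none' for a version change."""
    cur, new = parse_version(current), parse_version(candidate)
    if new[0] != cur[0]:
        return "major"
    if new[1] != cur[1]:
        return "minor"
    if new[2] != cur[2]:
        return "patch"
    return "none"


def too_fresh(released_at: datetime, now: datetime, min_age_days: int = 14) -> bool:
    """True if the release is younger than the assumed cooling-off window."""
    return now - released_at < timedelta(days=min_age_days)


# The dates are made up; the version pair is the `next` example from the text.
now = datetime(2025, 1, 15, tzinfo=timezone.utc)
released = datetime(2025, 1, 12, tzinfo=timezone.utc)
print(bump_type("15.5.0", "15.5.15"), too_fresh(released, now))  # prints: patch True
```

A patch bump that is only three days old is exactly the kind of case where "wait" can be a sensible answer even though the version change itself looks harmless.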

Multi-Ecosystem by Default

Most teams don’t live in a single language. A typical modern project might have a Python backend, a Node.js frontend, and Java microservices somewhere in the stack. DepAdvisor supports all three out of the box:

| Ecosystem | Dependency Files | Registry | Vulnerability Source |
|---|---|---|---|
| Python | requirements.txt, pyproject.toml (PEP 621 + Poetry) | PyPI | OSV.dev (PyPA advisory database) |
| Node.js | package.json | npm | OSV.dev (GitHub Advisory Database) |
| Java | pom.xml | Maven Central | OSV.dev (GitHub Advisory Database) |

The ecosystem is auto-detected based on which dependency files exist in the project, or you can force it with --ecosystem. The same risk analysis, the same vulnerability checks, the same output format, regardless of language.
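File-based detection of this kind can be sketched in a few lines. The file names come from the table above; the function name `detect_ecosystems`, the ecosystem keys (`python`, `nodejs`, `java`), and the `forced` parameter standing in for `--ecosystem` are assumptions made for illustration, not DepAdvisor's actual identifiers.

```python
from __future__ import annotations

import tempfile
from pathlib import Path

# File names come from the table above; the mapping itself is an assumption.
ECOSYSTEM_FILES = {
    "python": ("requirements.txt", "pyproject.toml"),
    "nodejs": ("package.json",),
    "java": ("pom.xml",),
}


def detect_ecosystems(project_dir: Path, forced: str | None = None) -> list[str]:
    """Return the ecosystems whose dependency files exist in project_dir.

    `forced` mimics an explicit --ecosystem override and skips detection.
    """
    if forced is not None:
        if forced not in ECOSYSTEM_FILES:
            raise ValueError(f"unknown ecosystem: {forced}")
        return [forced]
    return [
        name
        for name, files in ECOSYSTEM_FILES.items()
        if any((project_dir / f).is_file() for f in files)
    ]


# Demo on a throwaway directory containing a Node.js and a Java manifest.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "package.json").write_text("{}")
    (root / "pom.xml").write_text("<project/>")
    print(detect_ecosystems(root))            # ['nodejs', 'java']
    print(detect_ecosystems(root, "python"))  # ['python']
```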

One nice consequence of the design: adding a new ecosystem (Go, Rust, .NET, Ruby) is mostly about writing a parser and a registry client. The agent pipeline itself doesn’t need to change. If adding an ecosystem sounds interesting, it would be a great first contribution. More on that later.
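As a rough picture of what "a parser and a registry client" could mean for Go, here is a sketch with two small interfaces and a toy go.mod parser. Every name here (`Dependency`, `DependencyParser`, `RegistryClient`, `GoModParser`) is hypothetical; the real base classes in the repo may look quite different, and the parser only handles single-line entries inside a `require ( ... )` block.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    name: str
    version: str


class DependencyParser(ABC):
    """Hypothetical interface: turn a dependency file's text into records."""

    @abstractmethod
    def parse(self, text: str) -> list[Dependency]: ...


class RegistryClient(ABC):
    """Hypothetical interface: look up the newest published version."""

    @abstractmethod
    def latest_version(self, name: str) -> str: ...


class GoModParser(DependencyParser):
    """Toy go.mod parser for entries inside a `require ( ... )` block."""

    def parse(self, text: str) -> list[Dependency]:
        deps: list[Dependency] = []
        in_block = False
        for raw in text.splitlines():
            line = raw.split("//")[0].strip()  # drop trailing comments
            if line.startswith("require ("):
                in_block = True
            elif line == ")":
                in_block = False
            elif in_block and line:
                name, version = line.split()[:2]
                deps.append(Dependency(name, version))
        return deps


# Illustrative go.mod content, not taken from a real project.
GO_MOD = """\
module example.com/app

go 1.22

require (
    github.com/gin-gonic/gin v1.10.0
    golang.org/x/text v0.14.0 // indirect
)
"""
for dep in GoModParser().parse(GO_MOD):
    print(dep.name, dep.version)
```

Because the parser is a pure function of the file's text, it can be tested with string fixtures and no network access.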

Try It on Someone Else’s Code First

Most tools ask you to install them, configure them, and point them at your own project before you know if they’re useful. DepAdvisor takes a git URL directly, so you can try it on literally anything before committing.

Want to see what it says about a well-known project?

pip install depadvisor

depadvisor analyze https://github.com/pallets/flask.git

It clones the repo to a temp directory, analyzes it, cleans up after itself, and shows you the report. Takes about 90 seconds. If the output tells you something useful, you know the tool is worth installing into your own workflow.
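The clone, analyze, clean up sequence maps naturally onto a temporary directory used as a context manager. This is a sketch of the pattern, not DepAdvisor's implementation: `analyze_remote` and `git_clone` are hypothetical names, the shallow clone is an assumption, and `analyze` is whatever function produces the report for a local path.

```python
import subprocess
import tempfile
from pathlib import Path
from typing import Callable


def git_clone(url: str, dest: Path) -> None:
    """Shallow-clone url into dest; requires git on PATH."""
    subprocess.run(["git", "clone", "--depth", "1", url, str(dest)], check=True)


def analyze_remote(
    url: str,
    analyze: Callable[[Path], str],
    clone: Callable[[str, Path], None] = git_clone,
) -> str:
    """Clone into a temporary directory, run analyze on it, then clean up."""
    # TemporaryDirectory deletes the checkout on exit, even if analyze raises.
    with tempfile.TemporaryDirectory(prefix="depadvisor-") as tmp:
        checkout = Path(tmp) / "repo"
        clone(url, checkout)
        return analyze(checkout)
```

Passing the clone step in as a parameter keeps the function testable offline: a test can substitute a fake that writes a couple of files instead of hitting the network.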

This has been the single best way for people to figure out quickly whether DepAdvisor is useful for them. No commitment, no config, just a report on a project they already know.

Local, Cloud, or Both

By default, DepAdvisor runs on Ollama, a free local LLM runtime. The model (qwen3:8b) runs entirely on your machine, so your code never leaves your laptop: no API keys, no per-run cost, no data sent anywhere.

For teams who prefer cloud LLMs or want faster/higher-quality analysis, OpenAI is supported via the same interface:

# Local Ollama (default): free, private, slower

depadvisor analyze .

# OpenAI: faster, sharper analysis

depadvisor analyze . --llm openai/gpt-4o-mini

A practical note on this: OpenAI is faster and produces more nuanced risk assessments. An analysis that takes 60-90 seconds locally finishes in 15-20 seconds on GPT-4o-mini, and the reasoning tends to be sharper. If you have an API key and you’re analyzing non-sensitive code, it’s probably the better default for daily use.

Ollama is the right choice when privacy matters (private codebases, regulated environments) or when you want zero cost and zero external dependencies. It still produces genuinely useful output, just expect to wait a bit longer.

Dropping It Into CI/CD

The thing I actually use DepAdvisor for is making Monday mornings less busy. Here’s a GitHub Actions workflow that runs weekly and posts a report as an issue comment:

name: Weekly Dependency Review

on:
  schedule:
    - cron: '0 9 * * 1' # Monday 09:00 UTC
  workflow_dispatch:

permissions:
  contents: read
  issues: write # needed to post the report as an issue comment

jobs:
  review:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: pip install depadvisor
      - run: |
          depadvisor analyze . \
            --llm openai/gpt-4o-mini \
            --format github-comment \
            --output report.md \
            --fail-on critical
        env:
          OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}
      # Post the report even when --fail-on critical made the previous step fail
      - uses: peter-evans/create-or-update-comment@v4
        if: always()
        with:
          issue-number: 1
          body-path: report.md

Every Monday at 9am UTC (GitHub Actions cron schedules run in UTC), you get a fresh comment on a tracked issue telling you what’s critical, what’s recommended, and what to leave alone this week. The --fail-on critical flag is the CI-friendly piece. It exits with code 1 if any critical-risk updates are detected, so you can block merges until they’re addressed.
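The exit-code behavior can be pictured as a small threshold check. This sketch is an assumption about how such a flag might work, not DepAdvisor's code: the function name is hypothetical, and treating the flag as "this level or higher" with SKIP never triggering a failure is a guess. For `--fail-on critical` it reduces to the behavior described above.

```python
from __future__ import annotations

# Assumed severity ranking; "skip" is deliberately absent so it never fails a build.
SEVERITY = {"low": 1, "medium": 2, "critical": 3}


def exit_code(found_levels: list[str], fail_on: str | None) -> int:
    """Return 1 if any found level is at or above the fail_on threshold, else 0."""
    if fail_on is None:
        return 0
    threshold = SEVERITY[fail_on]
    return int(any(SEVERITY.get(level, 0) >= threshold for level in found_levels))


print(exit_code(["low", "critical", "skip"], "critical"))  # 1
print(exit_code(["low", "medium", "skip"], "critical"))    # 0
```

In a CLI entry point, the result would typically be handed to `raise SystemExit(...)` so that the CI step fails when the threshold is met.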

The same principle works in GitLab CI, Jenkins, Azure DevOps, or anywhere else you can run a Python command. There’s also an HTTP API mode (depadvisor serve) for teams who want to integrate it into a dashboard or internal tool.

How It Works (The Quick Tour)

If you’re curious about what’s going on under the hood, here’s the short version.

DepAdvisor is built with LangGraph, the agent orchestration framework from the LangChain team. The pipeline flows through six nodes with conditional routing between them:

| Node | What It Does | Uses the LLM? |
|---|---|---|
| parse_deps | Reads requirements.txt, package.json, pom.xml, etc. | No |
| check_updates | Queries PyPI, npm, and Maven Central for available versions | No |
| fetch_vulns | Queries OSV.dev for known CVEs | No |
| fetch_changelogs | Fetches GitHub release notes for packages with updates | No |
| analyze_risk | Classifies each update (critical/medium/low/skip) with reasoning | Yes |
| generate_report | Synthesizes the prioritized summary you see at the top of the output | Yes |

One design choice worth mentioning: most of the work is not LLM work, and that’s intentional. Parsing a pom.xml isn’t an AI problem. Comparing semver versions isn’t an AI problem. Calling the npm registry isn’t an AI problem. The LLM shows up only in the two places where reasoning over unstructured data is genuinely needed: classifying risk based on changelog content and writing the final summary.

This matters for reliability. Every deterministic node is a node you can unit test, a node that runs in milliseconds, and a node that will never hallucinate. The LLM acts as an intelligence layer on top of reliable data pipelines, not as a replacement for them.
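As an example of what a deterministic node can look like, here is a sketch of a vulnerability lookup against OSV.dev's public query endpoint (`POST https://api.osv.dev/v1/query`). The endpoint and the ecosystem labels (`PyPI`, `npm`, `Maven`) are OSV's own; the function names and the `python`/`nodejs`/`java` keys are hypothetical, and this is not DepAdvisor's actual `fetch_vulns` code. Pagination via `next_page_token` is ignored for brevity.

```python
import json
import urllib.request

OSV_QUERY_URL = "https://api.osv.dev/v1/query"
# Keys are hypothetical; values are the ecosystem names OSV expects.
OSV_ECOSYSTEMS = {"python": "PyPI", "nodejs": "npm", "java": "Maven"}


def build_osv_query(ecosystem: str, name: str, version: str) -> dict:
    """Build the JSON body for a single package/version lookup."""
    return {
        "version": version,
        "package": {"name": name, "ecosystem": OSV_ECOSYSTEMS[ecosystem]},
    }


def fetch_vuln_ids(ecosystem: str, name: str, version: str, timeout: float = 10.0) -> list[str]:
    """Return the IDs of known vulnerabilities affecting name at version."""
    payload = json.dumps(build_osv_query(ecosystem, name, version)).encode("utf-8")
    request = urllib.request.Request(
        OSV_QUERY_URL,
        data=payload,  # supplying data makes this a POST request
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # OSV returns an empty object when nothing matches, so default to [].
    return [vuln["id"] for vuln in body.get("vulns", [])]
```

Splitting payload construction from the network call is what makes the unit-testing point concrete: `build_osv_query` can be asserted on directly, with no HTTP involved.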

The graph has two conditional edges: one that skips the expensive LLM analysis if no updates are found, and one that retries the risk analysis if the LLM returns something malformed. And if you enable LangSmith tracing via environment variables, every run produces a visual trace of every node execution, every LLM call, and every decision. That makes both debugging and ongoing improvement much easier.
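The two conditional edges come down to two small routing functions. The sketch below is plain Python rather than LangGraph code, and several details are assumptions: the state keys, the JSON shape of the LLM reply, the retry budget of two, and routing the "no updates" case straight to the end are not stated in the source.

```python
import json

ALLOWED_LEVELS = {"critical", "medium", "low", "skip"}
MAX_RETRIES = 2  # assumed retry budget; the source does not state one


def is_valid_analysis(raw: str) -> bool:
    """Check that the LLM reply is a JSON object with a known risk level."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict):
        return False
    risk = data.get("risk")
    return isinstance(risk, str) and risk in ALLOWED_LEVELS


def route_after_updates(state: dict) -> str:
    """Skip the LLM stages entirely when there is nothing to analyze."""
    return "fetch_vulns" if state["updates"] else "end"


def route_after_analysis(state: dict) -> str:
    """Retry analyze_risk on malformed output until the retry budget is spent."""
    if is_valid_analysis(state["raw_analysis"]) or state["retries"] >= MAX_RETRIES:
        return "generate_report"
    return "analyze_risk"


print(route_after_updates({"updates": []}))                             # end
print(route_after_analysis({"raw_analysis": "oops", "retries": 0}))     # analyze_risk
print(route_after_analysis({"raw_analysis": "oops", "retries": 2}))     # generate_report
```

In LangGraph, functions like these are what you would pass to `StateGraph.add_conditional_edges`, together with a mapping from each returned label to a node name. Capping the retries matters because a model that keeps returning malformed output would otherwise loop forever.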

If you want to read the code, the full agent implementation lives in src/depadvisor/agent/ in the repo.

What DepAdvisor Doesn’t Do

Being upfront about the limits here, because tools that claim to do everything usually do nothing well.

It doesn’t auto-create PRs. Dependabot and Renovate already do that well. DepAdvisor is complementary: it tells you what to do with the PRs those tools open; it doesn’t replace them.

It doesn’t update your lockfile. It won’t run pip install -U or npm update for you. The actual update step is yours.

It’s not a full security scanner. It catches known CVEs from OSV.dev, which is a strong source, but dedicated tools like pip-audit or Snyk have broader coverage. Use both if security is a top priority.

It can be wrong. It’s an LLM making judgments based on data. If you’re about to make an irreversible decision, it’s still worth spot-checking its reasoning against the actual changelog. Treat it like a very well-read junior engineer: mostly right, occasionally wrong, always worth the time saved.

Get Started (Three Minutes)

# Install with pip

pip install depadvisor

# Or install with uv (faster)

uv tool install depadvisor

# Install Ollama for local LLM (or skip this and use --llm openai/gpt-4o-mini)

brew install ollama # macOS

# or: curl -fsSL https://ollama.com/install.sh | sh # Linux

ollama pull qwen3:8b

# Run it

depadvisor analyze .

# Or try it on a project you don't own

depadvisor analyze https://github.com/pallets/flask.git

This Is a Starting Point, Not a Finished Product

DepAdvisor is an initiative, not a finished product, and that’s deliberate. It solves a real problem I kept running into, but the shape of “dependency update advisor” looks different for every team. Maybe you want it to ignore certain packages, score risk differently, integrate with your internal ticketing system, or support ecosystems it doesn’t cover yet. That’s all possible, and the codebase is structured to make those customizations straightforward.

A few specific areas where help or your own fork would be really valuable:

- More ecosystems. Go (go.mod), Rust (Cargo.toml), .NET (*.csproj), and Ruby (Gemfile) would all fit cleanly into the existing architecture. The parsers and registry clients use abstract base classes designed for extension, so adding a new ecosystem is mostly about implementing two interfaces and writing tests. Great first contribution.

- GitHub Action. A proper GitHub Action wrapper would make DepAdvisor a one-line addition to any workflow.

- Dependency grouping. Related packages (e.g., fastapi+pydantic+starlette) should really be analyzed together since updating one often requires updating the others. Right now they’re analyzed independently. Interesting design problem.

- Custom risk policies. Right now the risk logic is encoded in the LLM prompt. Making it possible to plug in custom policy rules (e.g., “always skip major bumps for this specific package”) would be valuable for teams with specific constraints.

- Prompt iteration. If the LLM’s analysis feels off on a specific case, opening an issue with the input and output helps a lot. Prompt improvements compound quickly when driven by real examples.

- CI/CD examples for other platforms. Worked examples for GitLab CI, Jenkins, Azure DevOps, and CircleCI would broaden the reach.

The CONTRIBUTING.md in the repo has development setup instructions, and the code is structured to make extension straightforward. Fork it, extend it, ship it in your own tools. Issues and PRs are genuinely welcome, and if you just want to tell me what’s missing, open an issue and I’ll read it.

Links

- Install: pip install depadvisor or uv tool install depadvisor

- GitHub: github.com/chaubes/depadvisor

- PyPI: pypi.org/project/depadvisor

Give it a try and let me know what you think. Good feedback, bad feedback, weird edge cases, suggestions for features I haven’t thought of. It all helps. And if you build something interesting on top of it, please share. I’d love to see what people do with this.